Cognitive Absorption is not Cognitive Load. The difference matters.

The most popular metric in platform engineering measures the symptom, not the cause.

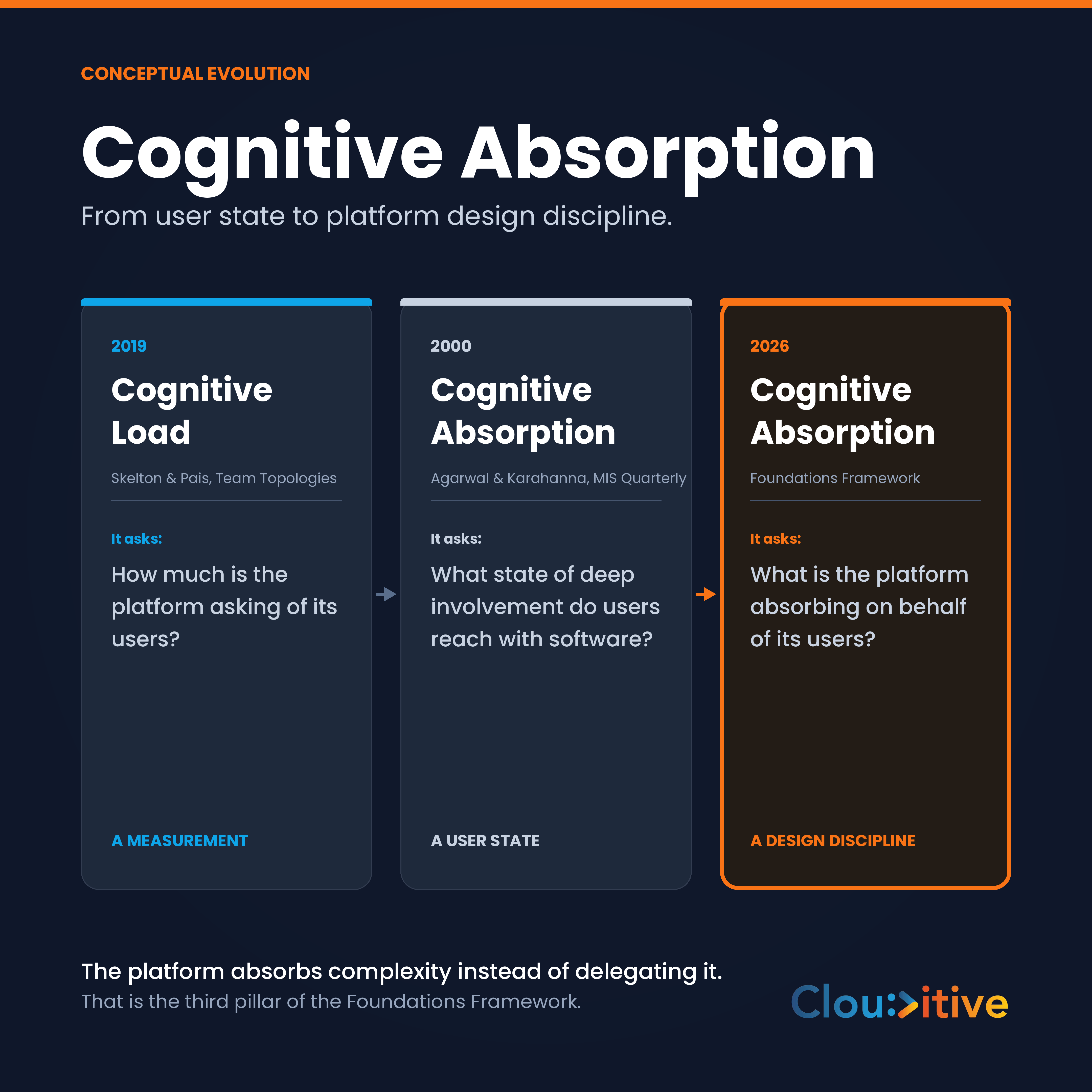

Cognitive Load, as Skelton and Pais applied it to software teams in Team Topologies (2019), tells you the load on developers is too high. It does not tell you why, or what to do about it. The metric is diagnostic. It surfaces a problem. It does not name the discipline that solves the problem.

I needed a concept that named the design intent, not the diagnostic. So I went outside platform engineering.

The 2000 paper that nobody in platform engineering reads

In 2000, Ritu Agarwal and Elena Karahanna published "Time Flies When You're Having Fun: Cognitive Absorption and Beliefs About Information Technology Usage" in MIS Quarterly. They defined Cognitive Absorption as a state of deep involvement with software, with five dimensions: temporal dissociation, focused immersion, heightened enjoyment, control, and curiosity. The paper introduced a behavioral construct for information systems research. It described what users feel when software disappears and the work emerges.

For twenty-six years the concept stayed inside academic IS research. It never crossed into platform engineering vocabulary because platform engineering was not yet a recognized discipline when the paper was published.

I extended it from a user state to a platform design discipline. That extended version lives in the Foundations Framework as the third pillar.

The conceptual move is simple to state and operationally consequential.

Cognitive Load asks one question. Cognitive Absorption asks a different one.

| Concept | Year | Origin | Question it answers |
|---|---|---|---|
| Cognitive Load | 2019 | Skelton and Pais, Team Topologies | How much is the platform asking of its users? |
| Cognitive Absorption (user state) | 2000 | Agarwal and Karahanna, MIS Quarterly | What state of deep involvement do users reach with software? |
| Cognitive Absorption (design discipline) | 2026 | Foundations Framework | What is the platform absorbing on behalf of its users? |

The first is a measurement. The second is a behavioral observation about the user. The third is a design intent for the platform team.

When you ask only the first question, you can detect the symptom. You cannot prescribe the cure. The platform team learns the developer is overloaded but not what work the platform should be doing instead.

When you ask the third question, the platform team has a clear mandate. Identify what the user is currently doing that the platform should be doing. Build that capability. Measure whether developers route through it.

This shift is not semantic. It changes what the platform team is accountable for.

Why this matters operationally

Shift left was a useful idea that became a damaging implementation. The intent was correct: catch issues earlier, when they are cheaper to fix. The implementation, in many organizations, dumped security, compliance, infrastructure, and operational concerns onto the developer's desk. The cognitive load that platform teams were supposed to absorb was instead delegated.

The METR 2025 study on AI in software development surfaced a parallel pattern. Experienced open source developers using AI coding tools on repositories they already knew well took nineteen percent longer to complete tasks than they did without the tools, even though they believed the tools had made them faster. The AI offered help. The user had to evaluate, integrate, and verify. The cognitive cost moved from typing the code to validating the AI output. Net effect: slower delivery.

In both cases, the same mechanism operates. Complexity that should be absorbed by an upstream system gets delegated downstream. The downstream worker reports satisfaction (the tool felt useful, the documentation existed) while the actual outcome degrades.

Cognitive Absorption names the correction. The platform absorbs complexity. The developer regains the time to do the work they were hired for.

The three signals I track

Cognitive Absorption is operational. It needs measurable signals or it remains aspirational language. Three signals, used together, give the platform team a useful read.

1. Flow state retention

Time to first context switch under standard work.

When a developer starts a focused task, how long is it until they have to leave the task to chase something the platform should have provided? A failing local build that requires Slack triage. A missing credential. An unclear deployment path. Each context switch is a moment where the platform failed to absorb a concern.

Track it longitudinally. The number itself matters less than the trend. A platform investment that does not move flow state retention up over a quarter is not absorbing.
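As a minimal sketch of how this trend could be computed, assume each focus session is already available as a week label, a task start time, and the times of any context switches, all in minutes. The record shape and the function names are hypothetical, not a Foundations Framework instrument.

```python
from statistics import median


def time_to_first_switch(task_start_min, switch_times_min):
    """Minutes from task start to the first context switch, or None if uninterrupted."""
    later = [t for t in switch_times_min if t >= task_start_min]
    return min(later) - task_start_min if later else None


def weekly_retention(sessions):
    """Map week label -> median minutes to first context switch.

    sessions: iterable of (week_label, task_start_min, [switch_times_min]).
    Uninterrupted sessions are skipped in this sketch; a real pipeline
    has to decide how to count them.
    """
    by_week = {}
    for week, start, switches in sessions:
        minutes = time_to_first_switch(start, switches)
        if minutes is not None:
            by_week.setdefault(week, []).append(minutes)
    return {week: median(values) for week, values in sorted(by_week.items())}


def trend(weekly):
    """Last week minus first week; a positive number means retention went up."""
    values = list(weekly.values())
    return values[-1] - values[0] if len(values) >= 2 else 0


# Hypothetical sample data: two sessions per week.
sessions = [
    ("2026-W01", 0, [12, 40]),
    ("2026-W01", 0, [20]),
    ("2026-W02", 0, [30]),
    ("2026-W02", 5, [45]),
]
weekly = weekly_retention(sessions)
print(weekly, trend(weekly))
```

The median is used rather than the mean so that one unusually long uninterrupted stretch does not hide a week of short ones.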

2. Context switch cost

Time to resume productive work after interruption.

Even when context switches are necessary (a real incident, a code review request from a colleague), the platform shapes how expensive the switch is. A developer with reliable bookmarks, fast local environments, and well-structured documentation can return to flow in minutes. A developer working against fragile tooling can lose half a day re-entering the problem.

This signal is sensitive to platform quality. It also surfaces toolchain rot before it becomes an outage.
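One way to approximate this signal, sketched under the assumption that you can export a time-ordered event stream with two kinds of events: the end of an interruption and the next sign of productive work (a commit, a test run, an editor save). The event kinds and the function name are hypothetical.

```python
def resume_costs(events):
    """Minutes between the end of each interruption and the next productive event.

    events: list of (minute, kind) tuples sorted by minute, where kind is
    "interruption_end" or "productive" (hypothetical labels).
    If several interruptions end before work resumes, the latest one is used.
    """
    costs = []
    pending = None  # minute at which the most recent interruption ended
    for minute, kind in events:
        if kind == "interruption_end":
            pending = minute
        elif kind == "productive" and pending is not None:
            costs.append(minute - pending)
            pending = None
    return costs


# Hypothetical sample: one clean interruption, then two back to back.
events = [
    (0, "productive"),
    (10, "interruption_end"),
    (25, "productive"),
    (60, "interruption_end"),
    (70, "interruption_end"),
    (75, "productive"),
]
print(resume_costs(events))
```

Interruptions that are never followed by productive work are dropped from the result, which understates the cost of the worst cases; treat the output as a lower bound.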

3. Paved road compliance under pressure

Rate at which users default to the platform's preferred path when deadlines tighten.

This is the signal that matters most. The paved road is the path the platform team built: the templated service, the golden CI pipeline, the standard deployment workflow. Under normal conditions, developers may follow it because it is convenient. The real test comes under pressure.

When the deadline tightens and the team is shipping fast, do they stay on the paved road or do they route around it?

If they route around, the platform is present but not absorbing. The shortcut they took was faster than the platform's preferred path. That is the data. It is not a developer failure. It is a platform design failure.

I have seen platforms that scored well on cognitive load surveys collapse on this metric. Developers liked the platform when there was time. They abandoned it when there was not. The platform was a peer, not an absorber.

What good looks like in the wild

A platform team that takes Cognitive Absorption seriously will reorganize its work along three patterns.

Pattern one. Default path beats documented path. The team stops investing primarily in documentation and starts investing in defaults. Every new service, every new pipeline, every new database should arrive in a state that requires zero developer decisions to be production ready. Documentation supports edge cases. The default is the common case.

Pattern two. Failure mode design. The platform team treats every developer interruption as a design failure. A flaky test, a slow build, a missing environment variable. Each is logged, classified, and reduced or absorbed. The platform team is accountable for the second order effect, not just the first order capability.
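The "logged, classified, and reduced" loop can be sketched as a simple ranking, assuming each interruption is recorded with a category and the minutes it cost. The field names and categories below are hypothetical examples.

```python
from collections import Counter


def rank_interruptions(log):
    """Total minutes lost per interruption category, largest first.

    log: iterable of dicts with "category" and "minutes_lost" keys
    (hypothetical field names for an interruption log).
    """
    minutes = Counter()
    for entry in log:
        minutes[entry["category"]] += entry["minutes_lost"]
    return minutes.most_common()


# Hypothetical sample log.
log = [
    {"category": "flaky_test", "minutes_lost": 30},
    {"category": "slow_build", "minutes_lost": 10},
    {"category": "flaky_test", "minutes_lost": 20},
    {"category": "missing_env_var", "minutes_lost": 45},
]
for category, lost in rank_interruptions(log):
    print(category, lost)
```

Ranking by minutes lost rather than by count keeps a rare but expensive failure from being buried under frequent cheap ones.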

Pattern three. Adversarial review of metrics. Quarterly, the platform team challenges its own measurements. Are the cognitive load scores honest, or are they artifacts of the survey instrument? Are the paved road compliance numbers true, or do they exclude the cases that matter most? This is the Signal Integrity pillar of the Foundations Framework, and it pairs with Cognitive Absorption. A platform team without adversarial measurement review will eventually believe its own dashboards.

The boundary with Team Topologies

I respect the Team Topologies work. The Cognitive Load concept is necessary. It surfaces a problem that older platform engineering vocabulary missed. The point of Cognitive Absorption is not to replace Cognitive Load. The point is to name the discipline that responds to it.

In practice, I run both:

- Cognitive Load surveys quarterly to baseline developer experience.

- Cognitive Absorption signals continuously to track whether the platform is absorbing the load surfaced by the surveys.

The survey is the diagnostic. The three signals are the treatment effect.

The deeper problem with shift left

Shift left worked when platform teams existed to absorb the shifted concerns. It failed when the shifted concerns landed on developers who had no upstream support.

The Foundations Framework treats this distinction as load bearing. We sequence Platform Foundation Build before any shift left initiative. Without the platform team operating as an absorber, every shift left effort is a delegation effort wearing a different hat.

I have walked into engineering organizations where the security team had successfully shifted scanning left, the compliance team had successfully shifted attestation left, and the operations team had successfully shifted on call left. The developer was the recipient of all three shifts. Predictably, deployment frequency dropped, change failure rate rose, and the senior engineers started leaving.

Cognitive Absorption is the corrective. The platform absorbs. The developer focuses. Velocity returns.

What did your team route around the platform last sprint?

This is the question I open every Foundations Assessment with. The answers are diagnostic.

If the team routed around the deployment pipeline because the pipeline was slow, the platform did not absorb that concern. If the team copy-pasted a Terraform module instead of using the standard one because the standard one had unclear documentation, the platform did not absorb. If the team added a manual cron job instead of using the scheduling capability the platform provides because the platform's version required three approvals, the platform did not absorb.

Each instance is a data point. The pattern across instances is the design failure that Cognitive Absorption names.

How this fits the Foundations Framework

Cognitive Absorption is the third of five pillars in the Foundations Framework, the proprietary platform engineering method that runs every Clouditive engagement. The first two pillars (Delivery Reliability and Signal Integrity) handle measurement honesty. The fourth and fifth (Security and Compliance by Default, Operational Accountability) handle distributed responsibility. The third pillar, Cognitive Absorption, is what the platform team owes its users.

It is the pillar that distinguishes a platform team from a tooling team. A tooling team builds tools. A platform team absorbs complexity.

How to start auditing your platform's Cognitive Absorption

You do not need a six month measurement program to start. The first two weeks produce a usable audit with no new tooling; weeks three and four triangulate it and act on it. Here is the sequence I use in the Horizon phase of every Clouditive engagement.

Week one. Capture the routes around. Pick five recent sprints. For each sprint, ask each squad two questions in writing. What did you build that the platform should have provided? What did you skip from the platform because it slowed you down? The answers are the qualitative baseline. Do not aggregate yet; treat each instance as a primary source. Ten squads times two answers times five sprints is one hundred data points. Patterns emerge fast.

Week two. Instrument the three signals. Flow state retention is recoverable from existing telemetry: CI logs show how often a failed build forces a context switch, and a Slack export shows interruption frequency during development hours. Context switch cost can be approximated by the time between code editor focus events, if your IDE telemetry is on. Paved road compliance under pressure is measurable from deployment metadata. For every production deployment in the last quarter, classify whether the path used was the canonical one or a custom variant. Build the ratio. Slice it by team and by sprint pressure level.
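The paved road ratio described above can be sketched in a few lines, assuming each production deployment has already been classified and tagged with its team and the pressure level of the sprint it shipped in. The field names ("team", "pressure", "path") and their values are hypothetical; how you label sprint pressure is your own call.

```python
from collections import defaultdict


def compliance_by_slice(deployments):
    """Paved road compliance ratio per (team, pressure) slice.

    deployments: iterable of dicts with hypothetical keys
      "team"     - owning team name
      "pressure" - sprint pressure label, e.g. "normal" or "high"
      "path"     - "canonical" for the paved road, anything else for a custom variant
    """
    counts = defaultdict(lambda: [0, 0])  # slice -> [canonical, total]
    for d in deployments:
        key = (d["team"], d["pressure"])
        if d["path"] == "canonical":
            counts[key][0] += 1
        counts[key][1] += 1
    return {key: canonical / total for key, (canonical, total) in counts.items()}


# Hypothetical sample data.
deployments = [
    {"team": "payments", "pressure": "high", "path": "canonical"},
    {"team": "payments", "pressure": "high", "path": "custom"},
    {"team": "payments", "pressure": "normal", "path": "canonical"},
    {"team": "search", "pressure": "high", "path": "custom"},
]
for slice_key, ratio in compliance_by_slice(deployments).items():
    print(slice_key, ratio)
```

Comparing a team's "normal" ratio with its "high" ratio shows how far compliance falls when deadlines tighten.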

Week three. Triangulate against the Cognitive Load survey. Run the standard Team Topologies cognitive load survey alongside the three signals. The interesting pattern is divergence. Teams reporting high cognitive load with high paved road compliance are signaling that the platform itself is too complex. Teams reporting low cognitive load with low paved road compliance are signaling that the platform is invisible, with developers operating outside it without complaint. Each pattern points to a different intervention.
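The two divergence patterns can be expressed as a small classifier. This is a sketch under stated assumptions: the survey score is normalized to a 0 to 1 scale, and both thresholds are placeholders you would calibrate against your own data. Only the two divergent quadrants are interpreted in the text; the other two are returned without a diagnosis.

```python
def classify_divergence(load_score, compliance,
                        load_threshold=0.6, compliance_threshold=0.8):
    """Label a team by how its survey load and paved road compliance relate.

    load_score: cognitive load survey result, assumed normalized to 0..1.
    compliance: paved road compliance ratio, 0..1.
    Both thresholds are hypothetical defaults, not calibrated values.
    """
    high_load = load_score >= load_threshold
    high_compliance = compliance >= compliance_threshold
    if high_load and high_compliance:
        # Developers stay on the paved road and still feel overloaded.
        return "platform too complex"
    if not high_load and not high_compliance:
        # Developers work outside the platform without complaint.
        return "platform invisible"
    return "no divergence"


# Hypothetical per-team inputs: (load score, compliance ratio).
teams = {"payments": (0.8, 0.9), "search": (0.2, 0.4), "billing": (0.8, 0.4)}
for name, (load, compliance) in teams.items():
    print(name, classify_divergence(load, compliance))
```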

Week four. Pick one absorption target. Resist the urge to fix everything. Pick the one place where developers most consistently route around the platform under pressure. Build the absorber. Measure the paved road compliance ratio for that path before and after. The first absorber sets the cultural expectation for the platform team. After three or four successful absorbers, the team has a discipline, not a backlog.

This sequence is the public version of what we run inside Horizon. The deeper rubric is reserved for engagement, but the pattern is reproducible by any platform team that decides to operate as an absorber.

Frequently asked questions

Is Cognitive Absorption a metric or a discipline?

Both. The 2000 user state version is a behavioral construct that can be measured directly with surveys. The 2026 design discipline version is operationalized through three signals (flow state retention, context switch cost, paved road compliance under pressure) that the platform team owns. The discipline is the platform team's accountability. The metrics are the feedback loop.

Does Cognitive Absorption replace Cognitive Load?

No. Cognitive Load is the diagnostic. Cognitive Absorption is the design intent that responds to it. Run both. The survey surfaces the problem. The three signals tell you whether your platform investment is fixing it.

Where does this concept live in published research?

The user state version comes from Agarwal and Karahanna 2000 in MIS Quarterly. The platform design discipline extension is original to the Foundations Framework, authored by Mat Caniglia at Clouditive. Both are credited explicitly when the framework is referenced.

Is the operating manual public?

The framework's principles, pillars, phases, and three persona platform user concept are public. The detailed operating manual, the rubrics, the cell by cell guidance, and the diagnostic instruments are reserved for clients in engagement. The first step into the method is the Foundations Assessment.

Read more

If you want to see how Cognitive Absorption sits inside a structured engagement model, read about the Foundations Framework or take the free Platform Score to baseline your own platform on the same five pillars Clouditive uses with clients.

If you are interested in the adjacent research on AI productivity that influenced this work, read my analysis of the 2025 DORA report on AI and platform engineering and the discussion of measuring AI productivity in engineering teams.

References

- Agarwal, R., and Karahanna, E. (2000). Time flies when you're having fun: Cognitive absorption and beliefs about information technology usage. MIS Quarterly, 24(4), 665-694. https://www.jstor.org/stable/3250951

- Skelton, M., and Pais, M. (2019). Team Topologies: Organizing Business and Technology Teams for Fast Flow. IT Revolution Press.

- DORA. (2025). State of AI-assisted Software Development. https://dora.dev/dora-report-2025/

- METR. (2025). Measuring the impact of early 2025 AI on experienced open source developer productivity. https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/


Mat Caniglia

Founder of Clouditive. 18+ years transforming engineering organizations across LATAM and globally through Developer Experience consulting.
